Mental health study: ChatGPT advice preferred over that of professionals

Artificial intelligence provides good answers to mental health questions. Young people even like ChatGPT’s responses better than healthcare professionals’ advice.

When young people ask about mental health, the answers from ChatGPT are both more useful and more relevant than the answers from healthcare professionals, according to the young study respondents. Healthcare professionals are also satisfied with the answers from artificial intelligence.

Easy to understand

“Professionals and young people both found that ChatGPT was able to provide advice that they perceived as relevant, empathetic and easy to understand,” says SINTEF researcher Marita Skjuve.

Skjuve and her colleagues at SINTEF and the University of Oslo selected real questions that young people posed to a Norwegian charity about their own mental health. The questions were answered both by ChatGPT and by professionals working for the youth information service ung.no.

The survey participants, 123 young people and 31 health professionals, reviewed the answers. They did not know who had answered what. Nor had they been told what the researchers were planning to investigate.

ChatGPT scored higher

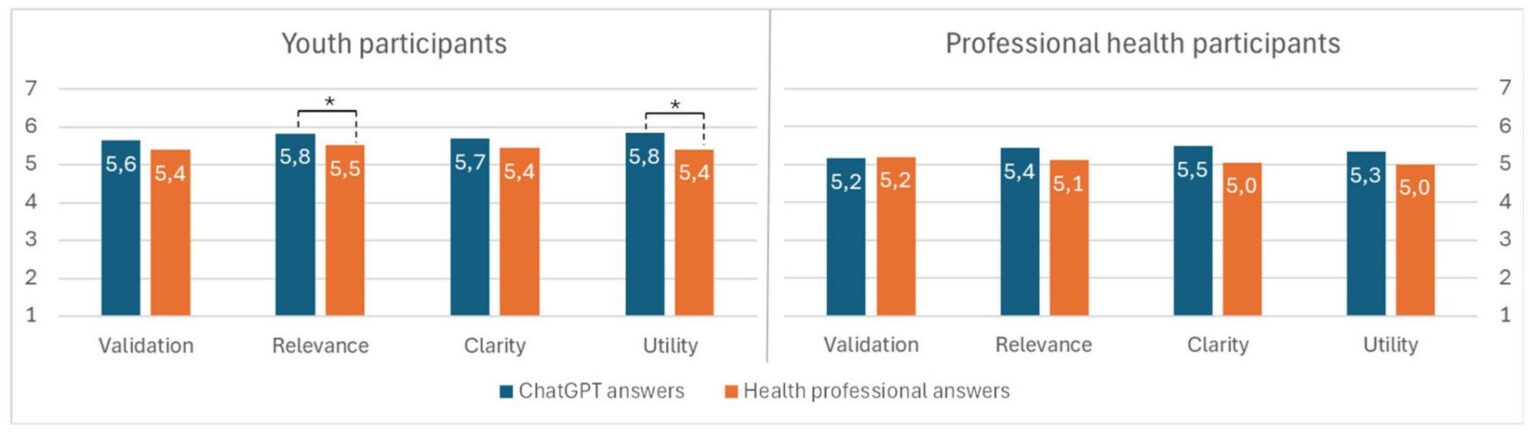

In the blind test, participants were asked to assess how useful, relevant, understandable and empathetic the answers were. They were also asked to choose the answer they liked best and explain why. The young people rated ChatGPT highest on every measure. The professionals also rated ChatGPT higher, but the differences were less pronounced.

“We observed that young people like answers from ChatGPT a little better because they are easy to understand and are perceived as being immediately useful. The answers describe what the youth can do to solve a possible problem related to their mental health,” says Skjuve.

“And we should also remember that ChatGPT is pretty good at giving neat and clear answers with bullet points,” she says.

Good, relevant, understandable and useful answers? Both young people and health professionals assessed how ChatGPT answered questions about mental health. The young participants were the most positive, but health professionals also thought that the AI answers were explained well. Table: Asbjørn Følstad

The health professionals do not always see it the same way. They tend to be a little more critical of ChatGPT’s diagnostic language. Nor did the professionals always find ChatGPT as validating or empathetic as an answer from a professional was.

“But on the whole, we see that both groups think that ChatGPT provides good answers that can help,” says the researcher.

Diagnosis risk

The study did not assess whether there were any errors in the answers, and the professionals did not point out any such errors. They were not asked to do so, and none of them volunteered that anything was outright wrong.

A few people nevertheless pointed out that ChatGPT could have a tendency to try to make a diagnosis. Health professionals who work for aid organizations have to abide by strict guidelines. They are supposed to give advice – but not provide direct health care or make diagnoses. ChatGPT has no such guidelines.

Skjuve wonders whether this could be a reason why ChatGPT is perceived as more practical and useful.

Professionals can learn from AI

The question, then, is whether artificial intelligence like ChatGPT should be used to help with mental health issues.

“What we’ve learned is that ChatGPT is capable of creating answers that young people understand and find easy to read. We humans can learn from that,” says Skjuve. She suggests that perhaps AI can support the work of a professional and help clarify the information for a young person.

Skjuve can imagine AI as a support tool. It could help professionals respond to young people better and faster. This way, mental health help can be scaled up, and the professionals can reach more young people who need help. At the same time, they retain professional control and can assure the quality of the AI answers.

“The last point is very important. AI can often give the wrong answer, and this can be critical in matters of mental health,” says Skjuve. She believes the future may be hybrid services where AI and health personnel work more closely together to formulate good answers.

She thinks the danger lies in young people going to AI to get an answer right away instead of waiting two to three days for a quality-assured response from a health service.

“AI does not always understand the context and makes up answers. That is why quality assurance from healthcare professionals is important in this area,” says Skjuve.

Researcher not surprised

The SINTEF researcher is not really surprised by the findings.

“In other studies we have seen that AI can often be perceived as responding better than health personnel do. AI is often good at responding in a welcoming and empathetic way.”

The researchers have now conducted a follow-up study without a blind test. In this case, the group involved knew who had actually answered the question. It appears that they prefer the answers provided by the health professionals and are more sceptical of AI. The results are not clear and have not yet been published.

Reference:

Marita Skjuve, Asbjørn Følstad and Petter Bae Brandtzæg: ChatGPT as a mental health advisory service: Comparing evaluations from youth and health professionals. Digital Health, February 2026, doi: 10.1177/20552076261427447